Why Every Marketer Needs Responsible AI Now

AI content tools are everywhere. They promise speed, scale, polish. But if you can’t spot when something’s been AI-crafted, you risk losing trust. From peer review in academia to blog posts on your brand site, transparency is key. Marketers need clear policies and smart workflows. And they need to detect AI-generated content before it erodes authenticity.

Journals such as JAMA now urge authors and reviewers to disclose AI usage, verify outputs, and keep human oversight front and centre. In marketing, that means simple checks and robust guidelines. Ready to bring clarity to your content? Unlock the future of marketing and detect AI-generated content with CMO.SO.

Lessons from AI in Peer Review: Transparency and Trust

Academic publishing has led the way. Many journals now require authors to disclose any use of AI tools, whether in the methods, the acknowledgements or the drafting of the text, including assistance from large language models. Reviewers are trained to watch for hallucinations, bias and misattribution. Editors insist on thorough revision and final approval by humans.

Marketers can learn from this. Set clear rules:

* Always note when AI helps draft or edit copy.

* Assign a human editor to validate facts.

* Clearly communicate your process to stakeholders.

This culture of transparency boosts credibility. It helps you detect AI-generated content lurking behind polished prose. And it safeguards your brand against unintended errors.

Pitfalls of Over-Reliance on AI Tools

There’s a dark side to unchecked AI. You get slick copy but risk:

* Hallucinations: made-up facts or citations.

* Bias: repetition of stereotypes or outdated views.

* Loss of brand voice: generic language that feels hollow.

If you can’t detect AI-generated content, errors slip through. A misplaced date, a wrong statistic, even a misleading claim. Your audience notices. Engagement dips. Trust evaporates. A little due diligence stops that cascade. A dash of human insight goes a long way.

Best Practices for Ethical AI Content Creation

You’re not fighting AI; you’re steering it. Here’s how:

1. Draft an AI policy. Define allowed and forbidden uses.

2. Train your team on tool limits and quirks.

3. Make human review a non-negotiable sign-off.

4. Leverage peer feedback: share drafts internally.
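One way to make the disclosure and sign-off steps above hard to skip is to encode them as a pre-publish check. The sketch below is a minimal illustration, not a feature of any particular tool: the `Draft` fields and the `publish_blockers` function are hypothetical names chosen for this example, and a real policy would likely need more rules.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Draft:
    """Hypothetical record for one piece of content awaiting publication."""
    title: str
    ai_assisted: bool              # was any AI tool used to draft or edit?
    ai_disclosure: str = ""        # note describing how AI was used
    reviewers: list[str] = field(default_factory=list)  # humans who signed off


def publish_blockers(draft: Draft) -> list[str]:
    """Return the reasons a draft cannot be published yet (empty list = ready)."""
    blockers = []
    # Policy rule (assumed): AI assistance must always be disclosed.
    if draft.ai_assisted and not draft.ai_disclosure.strip():
        blockers.append("AI was used but no disclosure note is recorded")
    # Policy rule (assumed): at least one human must sign off on every draft.
    if not draft.reviewers:
        blockers.append("no human reviewer has signed off")
    return blockers


draft = Draft(title="Spring launch post", ai_assisted=True)
for reason in publish_blockers(draft):
    print("Blocked:", reason)
```

Run as written, the example draft is blocked on both counts. Once a disclosure note and a reviewer name are added, `publish_blockers` returns an empty list and the draft can go ahead.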

CMO.SO makes this seamless. With one-click domain submissions and auto-generated SEO blogs, you can focus on strategy. Plus, the community-driven learning and engagement model means you see real-world examples of both good practice and common pitfalls. When everyone’s aligned, you’ll spot off-key phrases and detect AI-generated content before publication.

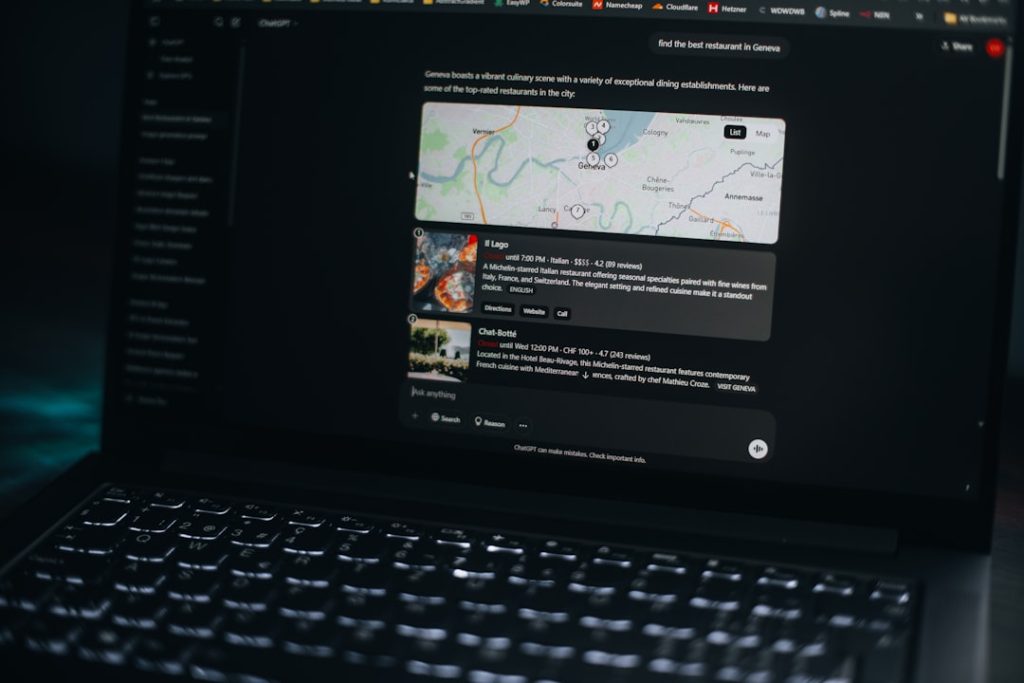

Practical Techniques to Detect AI-Generated Content

Many of today’s AI detectors use statistical analysis, linguistic fingerprints and metadata checks. You can also:

* Compare sentence complexity to known human writing.

* Check for excessive uniformity or overuse of filler phrases.

* Use stylometry: analyse writing style against past pieces.
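The three checks above can be roughed out in a few lines of Python. This is a sketch under stated assumptions rather than a working detector: the filler-phrase list and the function-word list are illustrative placeholders, the function names are invented for this example, and the scores are signals for a human editor to weigh, not proof of AI authorship.

```python
import math
import re
from collections import Counter

# Hypothetical filler phrases; replace with the ones your own editors flag.
FILLER_PHRASES = ["it is important to note", "in conclusion",
                  "game changer", "at the end of the day"]

# A small set of common function words used as a crude style fingerprint.
FUNCTION_WORDS = ["the", "and", "but", "of", "to", "a",
                  "in", "that", "it", "is", "we", "you"]


def sentence_lengths(text):
    """Split on ., ! or ? and return the word count of each non-empty sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def length_variation(text):
    """Coefficient of variation of sentence length; low values mean uniform prose."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    mean = sum(lengths) / len(lengths)
    variance = sum((n - mean) ** 2 for n in lengths) / len(lengths)
    return math.sqrt(variance) / mean


def filler_rate(text):
    """Filler-phrase occurrences per 100 words."""
    lowered = text.lower()
    words = len(lowered.split())
    hits = sum(lowered.count(phrase) for phrase in FILLER_PHRASES)
    return 100 * hits / words if words else 0.0


def style_vector(text):
    """Relative frequency of each function word in the text."""
    tokens = re.findall(r"[a-z']+", text.lower())
    counts = Counter(tokens)
    total = len(tokens) or 1
    return [counts[w] / total for w in FUNCTION_WORDS]


def style_similarity(text_a, text_b):
    """Cosine similarity of two style vectors (1.0 = identical profile)."""
    a, b = style_vector(text_a), style_vector(text_b)
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0
```

In practice you would run `length_variation` and `filler_rate` on a new draft and compare the numbers with those of posts you know were written in-house, then use `style_similarity` to see how closely the draft matches your archive. Short texts give noisy results, so treat any single score with caution.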

For deeper insight, CMO.SO’s Generative Engine Optimisation (GEO) tracks your content’s performance and flags inconsistencies. And the AI Optimisation (AIO) feature highlights where tone or cadence deviates from your norm. With these tools you can swiftly detect AI-generated content and maintain that genuine, human touch.

Empower your team to detect AI-generated content using CMO.SO

Real-World Case Study: Upholding Authenticity in Practice

A UK-based SME faced a dilemma. They’d trialled AI writing assistants to scale up blog output—but reader feedback plunged. Comments felt generic. Bounce rates rose. They needed a solution that balanced speed with authenticity.

By adopting clear guidelines inspired by academic peer review, and using CMO.SO’s GEO visibility tracking, they spotted AI-style passages within minutes. Editorial teams flagged awkward phrasing and factual gaps. The brand regained its unique voice and saw a 20% rise in engagement within weeks.

When you have a process and the right checks, you not only detect AI-generated content but boost reader loyalty too.

Conclusion: Embracing Responsible AI to Boost Your Brand

AI in content creation is here to stay. But without guardrails, it can backfire. By learning from peer review, setting tough standards and using smart tools, you maintain authenticity. When you detect AI-generated content early, you protect your brand’s credibility and your audience’s trust.

The future favours marketers who balance innovation with integrity. Keep human insight at the heart of your process. And don’t let unchecked algorithms steer your narrative off course.

Learn how to detect AI-generated content effectively with CMO.SO

What Marketers Are Saying

“CMO.SO helped us set clear AI guidelines and the GEO tracking feature made it trivial to spot off-brand text. Our readers noticed the difference instantly.”

— Sophie P., Content Director at GreenLeaf Marketing

“The community feedback loop is a game of spot-the-difference. We learn from others’ edits and catch AI-style slips fast. Our blog feels more genuine than ever.”

— Liam T., Founder of Artisan Crafts Co.

“Detecting AI-generated content used to feel like guesswork. Now we have solid metrics and peer insights. Our engagement is up 30%, and our voice stays uniquely ours.”

— Rachel K., Head of Digital at Nimbus Events