Introduction: Why Safe Content Generation Is Non-Negotiable

In a world where every tweet, update or comment can reach thousands in seconds, safety isn’t optional. Automated microblogging tools promise volume and speed, but they also carry risk. Without robust filters, you could publish harmful text or images by accident. That’s where safe content generation steps in.

From compliance headaches to brand reputation nightmares, unmoderated feeds can spiral fast. You need a system that flags hate speech, violent content and adult themes before they go live. Enter CMO.so’s AI-driven moderation. It scans text, images and even user prompts, so you stay in control.

CMO.so: Safe content generation for automated microblogs

Whether you’re a one-person team or a small agency, you deserve tech that does the heavy lifting. Let’s explore best practices for automated microblogging, and how CMO.so makes safe content generation a breeze.

Why Safe Content Generation Matters in Automated Microblogging

Imagine this: you schedule a week’s worth of updates and walk away. When you return, a misclassified phrase has landed in your feed—and now the brand is trending for the wrong reasons. Automated processes can miss context. AI can misinterpret slang or regional expressions. That’s why safe content generation is the foundation of any automated microblogging strategy.

Key risks without moderation:

- Reputation damage through unintentional offence

- Regulatory fines for non-compliant posts

- Loss of audience trust and engagement

- Hidden SEO penalties from flagged content

Safe content generation isn’t about over-policing. It’s about letting your team focus on creativity while AI handles the guardrails, so you can post at high volume without lowering your quality bar.

Key AI-Driven Moderation Techniques

At the heart of safe content generation lie several AI techniques. Here’s how modern systems stay one step ahead of harmful posts:

1. Text Analysis and Filtering

AI models trained on vast datasets spot hate speech, self-harm cues and adult content. They assign severity levels—low, medium, high—so you decide on automated removal or a human review.

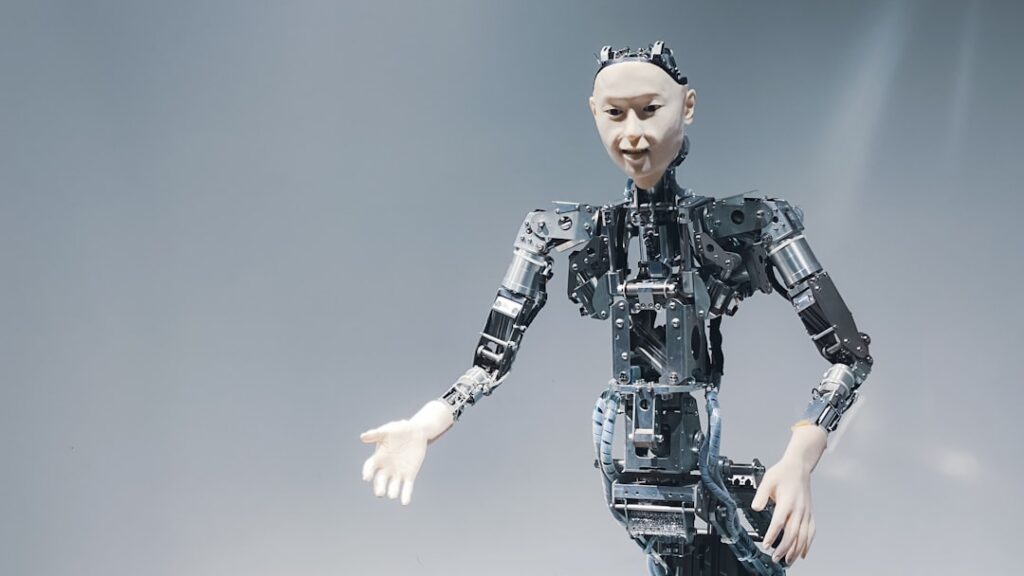

2. Image Moderation

From blood to explicit scenes, computer vision APIs classify images with multi-severity tags. Uploads flagged as risky get quarantined or sent for manual checks.

3. Custom Blocklists

Generic filters aren’t enough. You can import your own blocklists (specific slurs, brand-damaging terms or competitor names) to catch niche threats.

4. Workflow Automation

Set up trigger-based workflows. For example, posts tagged “high severity” get automatically held back. Low-level flags can sail through with a quick audit.

Each layer catches what the others miss, and severity-based routing keeps false positives from blocking good posts, so your microblogs stay safe, relevant and on-brand.
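To make the layering concrete, here is a minimal Python sketch of how a custom blocklist and a severity level might feed one routing decision. It is an illustration under stated assumptions, not CMO.so’s implementation: the `Severity` levels, the `route` function, the action names and the sample blocklist terms are all hypothetical, and the severity is assumed to come from whichever text or image classifier you use. Holding high-severity posts and auditing low-level flags follows the workflow described above; sending medium-severity posts to human review is an assumption.

```python
import re
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    NONE = 0
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class Decision:
    action: str  # "publish", "publish_with_audit", "review" or "hold"
    reason: str


# Hypothetical custom blocklist; real entries would come from your own policy.
BLOCKLIST = {"scamcoin", "rivalbrand"}


def hits_blocklist(text: str, blocklist: set[str]) -> list[str]:
    """Return blocklisted terms that appear as whole words in the text."""
    words = set(re.findall(r"\w+", text.lower()))
    return sorted(words & blocklist)


def route(text: str, model_severity: Severity) -> Decision:
    """Combine the blocklist with a classifier severity to pick an action."""
    hits = hits_blocklist(text, BLOCKLIST)
    if hits:
        return Decision("hold", "blocklist match: " + ", ".join(hits))
    if model_severity == Severity.HIGH:
        return Decision("hold", "high severity")
    if model_severity == Severity.MEDIUM:
        # Assumption: medium-severity posts wait for a human reviewer.
        return Decision("review", "medium severity")
    if model_severity == Severity.LOW:
        return Decision("publish_with_audit", "low severity")
    return Decision("publish", "no flags")
```

For example, `route("Buy ScamCoin now!", Severity.NONE)` is held because of the blocklist even though the classifier raised no flag, which is the kind of niche threat a generic filter would miss.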

Building a Scalable Moderation Workflow with CMO.so

Scaling safe content generation means more than throwing models at the problem. You need a platform that integrates seamlessly with your microblogging channels. CMO.so ticks every box:

- No-code setup: Define policies with a few clicks.

- Automated performance filtering: Analyse post engagement and hide underperformers—while flagged posts never see daylight.

- Geo-targeted optimisations: Localise filters for region-specific sensitivities.

- Hidden-post indexing: Low-performing drafts stay hidden from users but remain indexable by Google.

By blending these features, CMO.so delivers end-to-end moderation. You schedule, AI scans, analytics report—and you sleep soundly.

Best Practices for Implementing AI Content Safety

It’s not just about having the tech. You need a playbook. Here are tried-and-tested steps:

- Define clear content policies: “No hate, no adult content, no self-harm encouragement.”

- Calibrate sensitivity levels: Adjust AI thresholds to balance false positives and oversights.

- Review edge cases manually: Keep a small team for ambiguous or high-stakes flags.

- Monitor key metrics: Track block rate, language distribution, latency and false positive trends.

- Iterate based on feedback: Regularly update blocklists and custom categories.

With these in place, your automated microblogging stays nimble, accurate and compliant.
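Calibrating sensitivity is easier to reason about with numbers. The sketch below, in Python, assumes you have a small hand-labelled sample of posts, each with a classifier score between 0 and 1 and a human verdict. The function names, the sample data and the candidate thresholds are all hypothetical. It measures the false positive rate and the miss rate at each threshold, then picks the threshold with the fewest misses among those that stay within a false positive budget.

```python
def rates_at(threshold, samples):
    """Return (false_positive_rate, miss_rate) when flagging score >= threshold.

    samples is a list of (score, is_harmful) pairs from a labelled review set.
    """
    fp = sum(1 for score, harmful in samples if score >= threshold and not harmful)
    fn = sum(1 for score, harmful in samples if score < threshold and harmful)
    benign = sum(1 for _, harmful in samples if not harmful)
    harmful_total = len(samples) - benign
    fpr = fp / benign if benign else 0.0
    miss = fn / harmful_total if harmful_total else 0.0
    return fpr, miss


def pick_threshold(samples, candidates, max_fpr):
    """Pick the candidate with the lowest miss rate whose FPR fits the budget."""
    best = None
    for t in candidates:
        fpr, miss = rates_at(t, samples)
        if fpr <= max_fpr and (best is None or miss < best[1]):
            best = (t, miss)
    return None if best is None else best[0]


# Hypothetical labelled sample: (classifier score, human says harmful?)
SAMPLES = [
    (0.05, False), (0.20, False), (0.55, False),
    (0.35, True), (0.60, True), (0.90, True),
]
```

On this sample, a strict false positive budget pushes the threshold up and lets more harmful posts through, while a looser budget does the opposite. That trade-off is what the manual review team absorbs, so it is worth re-running the sweep whenever blocklists or categories change.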

Mid-Article Checkpoint

Need a turnkey solution for safe content generation? CMO.so stitches moderation, SEO and GEO-targeting into one dashboard.

Ensure safe content generation with CMO.so’s intelligent moderation

Overcoming Common Moderation Challenges

Even the best systems face hurdles. Here’s how to conquer them:

- False positives on slang: Maintain an evolving whitelist and train models on local dialects.

- Evolving imagery trends: Use rapid custom categories for emerging harmful patterns.

- Multilingual content: Prioritise languages your audience uses most, then expand coverage.

- User-generated prompts hitting LLMs: Implement prompt shields that detect hostile inputs before they reach the generator.

By anticipating these challenges, you make safe content generation a living, breathing process.
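As a rough illustration of the prompt shield idea, the Python sketch below screens a user prompt before it is passed to a text generator. It is a deliberately naive, pattern-based stand-in: the patterns, `screen_prompt` and `guarded_generate` are hypothetical, and a production shield would more likely rely on a trained classifier than on a handful of regular expressions.

```python
import re
from typing import Callable, Optional

# Hypothetical examples of hostile phrasing; a real list would be far broader.
HOSTILE_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.I),
    re.compile(r"disregard (the |your )?(system|previous) prompt", re.I),
    re.compile(r"reveal (the |your )?system prompt", re.I),
]


def screen_prompt(prompt: str) -> list[str]:
    """Return the patterns the prompt matches; an empty list means it passed."""
    return [p.pattern for p in HOSTILE_PATTERNS if p.search(prompt)]


def guarded_generate(prompt: str, generator: Callable[[str], str]) -> Optional[str]:
    """Call the generator only if the prompt passes the shield."""
    if screen_prompt(prompt):
        return None  # Blocked: the hostile input never reaches the generator.
    return generator(prompt)
```

The key design point is the ordering: the check runs before generation, so a blocked prompt costs nothing downstream and can be logged for the manual review queue.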

Future Trends in AI Moderation

Brace for the next wave of content safety:

- Real-time dialogue monitoring: Instant chat moderation for customer support and community forums.

- Groundedness detection: Ensure AI responses stay close to your verified sources.

- Augmented human workflows: AI suggests decisions; humans approve them in seconds.

CMO.so is already prototyping these features. Stay tuned for more ways to keep your microblogs safe and smart.

Conclusion

Safe content generation isn’t a luxury—it’s your digital shield. Without it, even the smallest brand can stumble into controversy. By combining robust AI models with intuitive workflows, CMO.so empowers you to scale microblogging without fear. From text filters to image scans and custom blocklists, every layer works to keep your posts clean, compliant and engaging.

Ready to see safe content generation in action?

Experience safe content generation with CMO.so today